From Visibility to Reasoning: The New Rules of Search in the AI Era

10 talks, one message repeated over and over: SEO isn’t dying, but it’s turning into something else. The fight to appear in a list of 10 blue links is fading. Now we’re also competing to be picked as evidence inside AI pipelines that retrieve, verify, and synthesize information in milliseconds.

Here’s what I’m taking away from Day 1, the day dedicated to “the science” — first the top 5 ideas, then a talk-by-talk recap.

TL;DR — The 5 ideas tying together Day 1 of #SEOWeek 2026

- Ranking is no longer the battle; eligibility is. Before anything gets ranked, AI systems filter what even makes it into the candidate set. If your content isn’t mathematically close to the query, it doesn’t get crawled, scored, or considered. Everything we’ve been optimizing for happens after that first layer.

- The unit of competition is the passage, not the page. AI breaks your content into chunks, turns them into vectors, and evaluates each claim independently. A whole page can be solid and still lose because its individual passages don’t deliver information gain. Every sentence has to earn its place.

- Your brand is a mathematical object: the centroid. The set of all your content forms a midpoint in vector space. That centroid is your brand’s real identity to the AI — not your homepage, not your brand book. If your assets send contradictory signals, the centroid scatters, you blur into the competition, and you stop being retrievable.

- The new metric is confidence, not volume. Re-rankers reward certainty and punish ambiguity (vague language, hedging, unsupported claims). More content isn’t the answer; better structure is. Fresh data, clear entities, knowledge graphs, consistent unique identifiers, and verifiable citations are what move the needle.

- 2026 SEO is engineering and business, not tactics. Commercial tools have fallen behind doing lexical math with an AI wrapper on top. The edge belongs to professionals who build their own tools, master model context, and know how to translate all of this into money. Classical attribution is broken, and you have to learn to sell impact with fuzzy math.

The 10 talks of Day 1 at #SEOWeek 2026, one by one

A short summary of each talk, in the order they were given.

1. Krishna Madhavan (Microsoft)

Speaker: Krishna Madhavan, Microsoft Talk: The Invisible, Converged Web: Architecting Visibility for the AI Economy

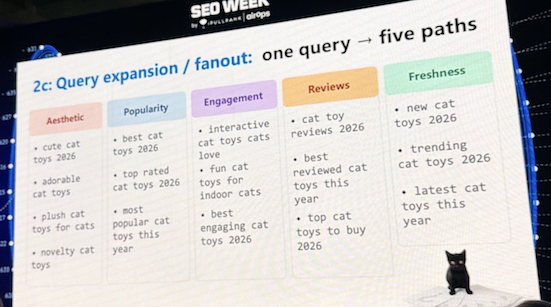

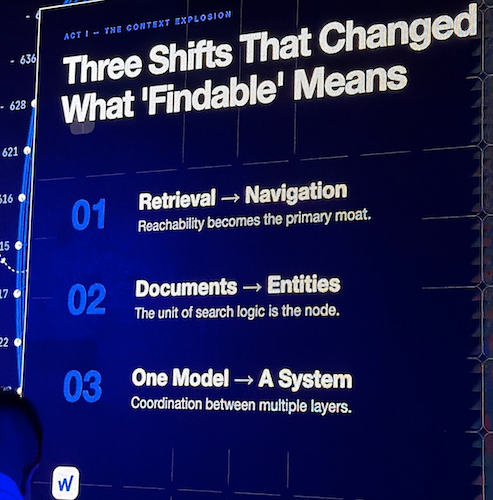

Krishna opened the day with the most complete map of how a query actually travels inside an AI system. Every search goes through a shared DNA — understanding, transformation, expansion (query fanout), multi-route retrieval, deduplication, ranking, and eligibility filtering — and only then does it branch. The classic branch generates snippets in three steps; the grounding branch enters a much more demanding phase of evidence selection and cross-model verification, where one AI literally critiques another AI’s claims before citing them.

His practical message: if engines can’t read your content, there’s no visibility. Semantic headings, careful JavaScript, IndexNow to notify real changes (it already powers more than 50% of new URLs in Bing and Copilot), and surgical use of data-nosnippet to control which parts you give to the AI without losing rank. He also dropped a sneak peek: Microsoft is preparing features that will surface the exact intent of grounded queries, topic mapping, and a citation share metric inside Bing Webmaster Tools.

Follow the author:

2. Andrea Volpini (WordLift)

Speaker: Andrea Volpini, WordLift Talk: Beyond the Million-Token Window: TurboQuant and RLM-on-KG

Andrea attacked a widespread belief: that models with massive context windows solve the memory problem. Even though we’re now talking about near-infinite capacities, the “lost in the middle” phenomenon persists — models still ignore what sits in the center of long prompts — and computational cost grows quadratically. More context isn’t the answer. Better navigation is.

His proposal: turn unstructured information into entity-based knowledge graphs, with RDF triples and consistent unique identifiers. In his recent research, structured data on its own barely moves the needle in Vertex AI, but when used to generate logically connected entity pages, retrieval precision jumps 20%. Combined with Recursive Language Models on graphs, his team measured up to a 71% improvement over GraphRAG on questions that require reasoning over scattered evidence. The line worth framing: “it’s not a volume game, it’s a connection game.”

Follow the author:

3. Mike King (iPullRank)

Speaker: Mike King, iPullRank Talk: F*ck It, I’ll Do It Myself

Combative talk from Mike, calling out that most commercial SEO software is still doing lexical math (TF-IDF, BM25) with a ChatGPT wrapper on top, while Google has been running on semantic search since 2013.

The industry has ignored both the Google leaks and the findings from the DOJ antitrust trial (more than 14,000 data points), and keeps selling PageRank approximations when vector embeddings are infinitely more important today.

We’re missing confirmed metrics, public embedding indexes, hybrid models, and tools that monitor HTTP 499 codes (when an AI agent cancels the request because your server takes too long).

His response wasn’t a complaint — it was a full open-source toolkit:

- Search Telemetry Project (crawler with 3,000 metrics extracted from the leaks)

- Vactor (distributed embedding network)

- Vector Workstation (3D topic maps)

- Q4ia Cloud

- Agent Skills Manager

Recommended stack: N8N, Ollama, Gemma for embeddings, language-track for chunking.

His closing question: are you playing to win, or playing to play?

Sounds familiar to me because… take a look at my website’s homepage :)

Follow the author:

4. Noah Learner (Sterling Sky)

Speaker: Noah Learner, Sterling Sky Talk: Build the Tool Your Team Actually Needs

Noah took Mike’s idea to the practical level by showing SEO Loop, a platform he built single-handedly with Claude Code to solve a real pain agencies suffer (client churn).

Instead of reports with impressions and rankings, it translates search volume directly into a revenue formula like volume × CTR × AOV × conversion rate × lead rate, and connects it to executive dashboards, automatic opportunity discovery, and quadrant-based topic maps.

Technical advice (which echoed across other talks): master the LLM context. Always work within the first 20% of the context window, condense when switching sessions, keep your CLAUDE.md to the bare minimum.

His strict rules (500-line cap per commit, no weekend deploys, mandatory “shape” tests) dropped his error rate from 80% to 15%.

The underlying point: an SEO who is an expert in their domain + AI can match the output of teams of 5 to 20 people.

Follow the author:

5. Annie Cushing

Speaker: Annie Cushing Talk: From Vibes to Veteran: 7 Tips to Disaster-Proof Your Code

Annie complemented the security side with a tour of real disasters from the past year:

- production databases wiped by agents

- “123456” passwords on AI tools holding data of 64 million candidates

- exposed source code

- deleted email inboxes

Her message wasn’t alarmist — it was about adding discipline and treating AI like a new employee who needs explicit rules.

Her practical playbook covers five fronts:

- Structure and compliance (never credentials in code,

.envfiles, a rules file in.md) - Modular design (from a 6,000-line file to 32 well-structured directories, CSS variables for the brand)

- AI control (one or two attempts max, then strategic logging — never let it guess in a loop)

- Mastery of Chrome DevTools (Capture Node Screenshot, the Computed tab, copying outerHTML as a reference)

- Failure prevention (stability freezes, redundant backups, mandatory CI/CD)

Her advanced technique: fan-out / fan-in, using a powerful model as orchestrator and cheap agents in parallel for bulk tasks.

Follow the author:

6. Dale Bertrand (Fire and Spark)

Speaker: Dale Bertrand, Fire and Spark Talk: Prioritize Investments by Financial Impact

Dale opened with the best (and most adorable) analogy of the day: his son’s lemonade stand, where the boy ran an A/B test (his two-year-old sister vs. the family dog Ginger) without caring about statistical rigor, because all he wanted was money for a Nintendo Switch.

That’s the conversation with leadership. Traditional SEO metrics are precise but useless — up to 70% of AI-driven traffic lands in GA4 as “direct,” with no possible attribution.

His solution is the 3 T’s framework:

- Tight metrics (aligned with what leadership cares about: revenue, payback, CAC)

- True metrics (directionally correct even if imperfect)

- Translated metrics (in the language of business)

And fuzzy math as the main tool.

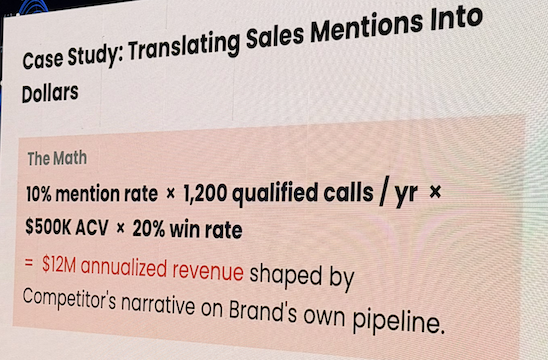

Real case: a competitor’s comparison page was getting only 40 clicks a month according to the tools, but it appeared in 64% of AI answers, and 10% of leads mentioned it on calls. Multiplying by 1,200 annual leads, $500,000 USD contract value, and 20% close rate, the risk was $12 million. The CFO approved the budget in the next meeting.

Follow the author:

7. Jori Ford

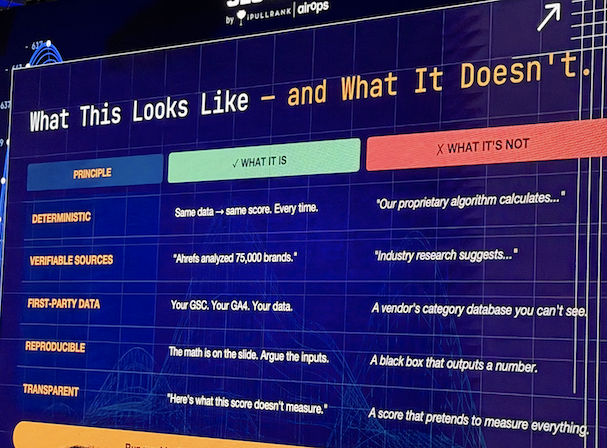

Speaker: Jori Ford Talk: HEO: The Hybrid Engine Score

Jori proposed the in-house tool that holds the entire conversation with leadership together: the HEO Score (Hybrid Engine Optimization), a weekly, reproducible scoring system that measures five signals:

- Presence

- Prominence

- Citation Quality

- Validation

- Business Impact

Run against a closed group of 40 seed queries (not invented ones) pulled from real sources like Google Search Console, sales, support, and reviews, the goal is to stop hopping between 20 tools with subjective metrics and consolidate into a single governance framework.

The model lets you choose a strategic profile (Awareness, Conversion, or Authority) that weights the signals, and incorporates a diagnostic protocol that only triggers investigation when there’s a real “signal collapse,” defined as a 3-point drop.

Her case with Aspen Group revealed findings impossible to detect with isolated metrics: local queries weighted four times more in LLMs than in Google, and the threshold rating for specialized recommendations (e.g., a dentist) was already higher than the classic 4.6.

Discipline, says Jori, cuts analysis down to about 2 hours a week and generates the kind of executive trust that survives any algorithm change.

Follow the author:

8. Metehan Yeşilyurt

Speaker: Metehan Yeşilyurt Talk: Everything Is an Entropy Game: What Dies Between Retrieval and Citation

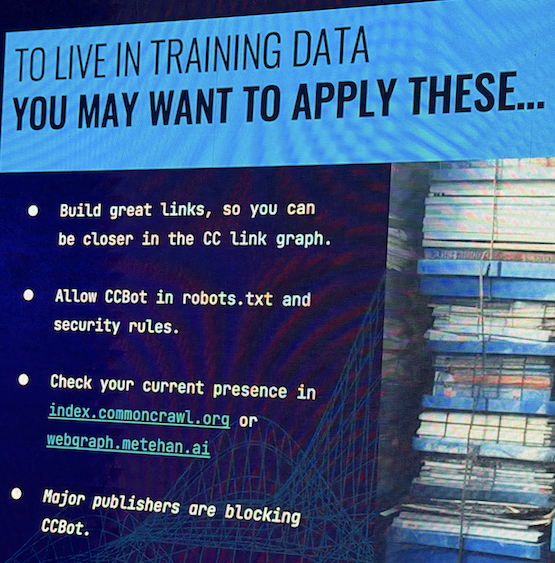

Metehan spent a year reverse-engineering real configurations of ChatGPT, Perplexity, and Gemini, and came back with a powerful thesis: everything is an entropy game.

Today’s re-rankers are, in essence, confidence machines that score how much a passage reduces the answer’s uncertainty. Vague language, hedging phrases, conditional content, and languages with inefficient tokenization (non-English alphabets, emojis) all increase entropy and get penalized.

What’s reliable, fresh, and structured wins — which explains why mediocre but consistent pages outperform brilliant but ambiguous ones.

He also brought an uncomfortable data point for many publishers: in his experiments with massive programmatic SEO, AI bot traffic (GPTBot, ChatGPT-User) is already exceeding traditional Google organic traffic in plenty of ecosystems.

Blocking CCBot from Common Crawl to “protect” your content is a strategic mistake — it kicks you out of the algorithmic center of gravity from which the next generations of models are being built.

Worth considering: audit your logs for 4xx errors hitting AI bots, because sometimes fixing a single crawl issue triggers visibility gains.

Follow the author:

9. Scott Stouffer

Speaker: Scott Stouffer Talk: Is AI Seeing the Brand You Think You’ve Built?

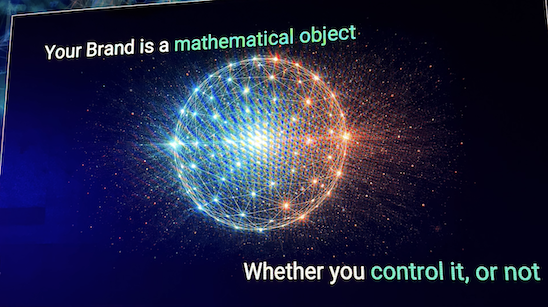

Scott delivered the most conceptual idea of the day, and for many the most revealing: your brand exists as a mathematical object inside AI systems.

Each fragment of your content becomes a vector, the vectors group into clusters of meaning, and the midpoint of all of them is the centroid.

That centroid is your brand’s real identity to the AI, regardless of what your homepage, brand book, or tone-of-voice guide say.

The problem is convergence: since almost every brand follows the same best practices and uses the same sources and structures, their centroids collapse into the same region of space — so to the AI, they’re substitutable.

And being substitutable is the biggest risk, because the system only retrieves a couple of representatives and discards the rest. The solution isn’t publishing more, it’s publishing differently — finding the angle that pulls your centroid out of the collision zone.

And abandoning static optimization in favor of a continuous cycle of measure, adjust, and measure again, because every new piece tugs slightly at the centroid, and without control it drifts until the brand identity fragments.

Follow the author:

10. Jeff Coyle (Siteimprove)

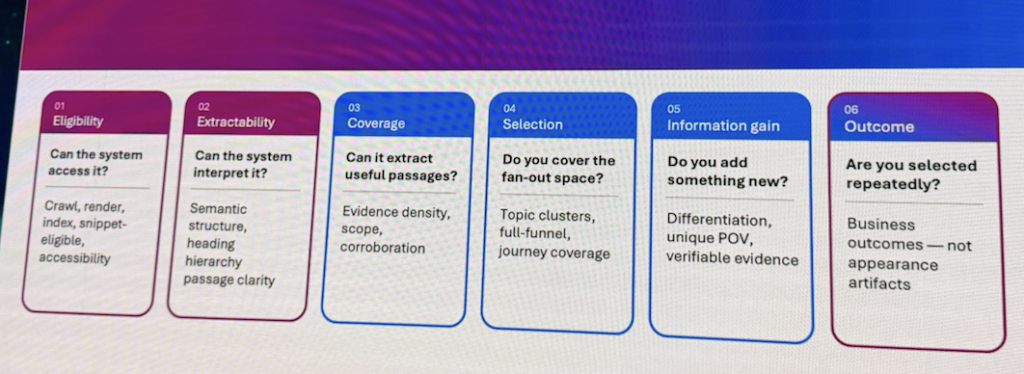

Speaker: Jeff Coyle, Siteimprove / ex-MarketMuse Talk: From Showing Up to Winning

Jeff closed the day pulling the thread between every previous idea. According to him, measuring appearances and citations isn’t enough — you have to understand the mechanism.

Technical eligibility (crawlability, rendering, semantic headings, accessibility) is the first barrier, and most AI search failures start there.

He illustrated it with a real case from a large healthcare organization that published “myths about diseases” articles with poorly structured headings — and the AI ended up attributing those myths to them as if they were endorsing the myths as real causes. A multi-billion-dollar PR crisis, contained in days thanks to monitoring.

Once eligibility is cleared, Jeff explained that Google no longer runs a linear search but executes query fanout (up to 15 parallel queries with 20 results each), forming a pool of 600 candidates to cover the entire user journey.

That’s where the passage competes — not the page. Every claim is evaluated separately, and if it doesn’t deliver information gain or can’t be corroborated, it’s discarded and drags its neighbors down with it.

The shortcut of slapping FAQ blocks with schema at the bottom of the page is a trap — sometimes you need to build 12 robust articles to move the selection of a single passage.

His closing line: by 2026, every passage matters.

Follow the author:

Soy MJ Cachón

Consultora SEO desde 2008, directora de la agencia SEO Laika. Volcada en unir el análisis de datos y el SEO estratégico, con business intelligence usando R, Screaming Frog, SISTRIX, Sitebulb y otras fuentes de datos. Mi filosofía: aprender y compartir.